
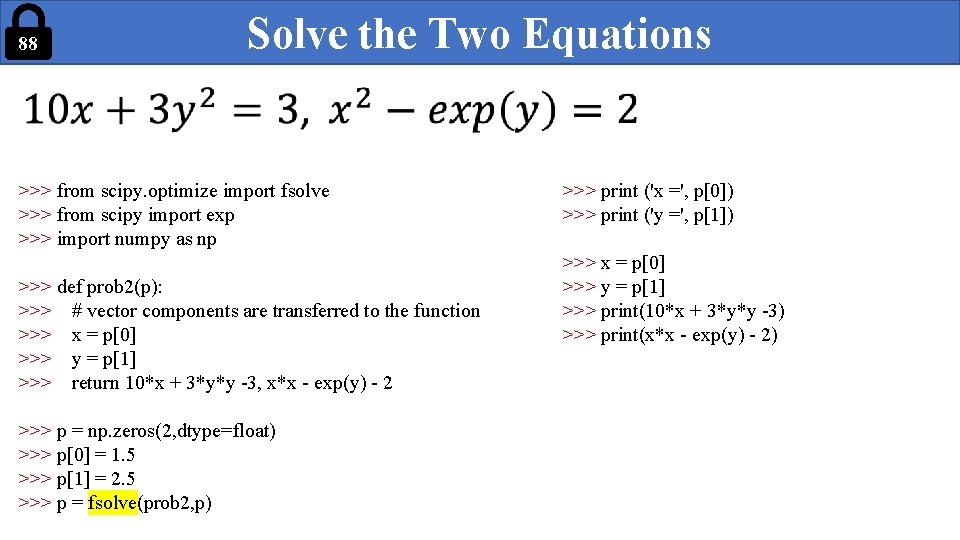

Scipy optimize

3/24/2024

X.shape = n_x, p Y.shape = n_y, q U.shape = m_x, p V.shape = m_y, q Z.shape = 2m_x, 2m_yĬalculating the gradient and hessian from this equation is extremely unreasonable in comparison to explicitly deriving and utilizing those functions.

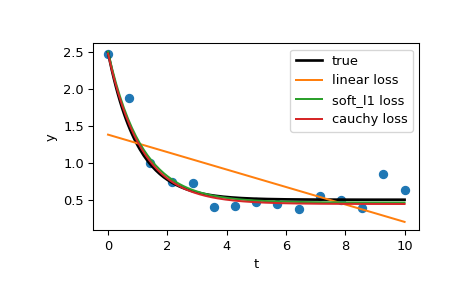

One fun example is the kernel based implementation of Inductive Matrix Completion. As such I always try to derive the functions explicitly myself, regardless of how difficult that may be. It really starts to add up with complex functions. %timeit minimize(fun, x0, args=(a,), method='dogleg', jac=fun_der, hess=fun_hess)īut you could calculate the derivative functions as such: def fun_der(x, a):Īs you can see that is almost 50x faster. For example with your method: x0 = np.array() I get that this is a toy example, but I would like to point out that using a tool like Jacobian or Hessian to calculate the derivatives instead of deriving the function itself is fairly costly.
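The cost argument for hand-derived derivatives can be illustrated with a small sketch. The post does not show its `fun` or which `Jacobian`/`Hessian` tool was used. This example therefore assumes a hypothetical quadratic `fun(x, a)` and replaces the numerical tool with simple hand-written central differences (`fd_gradient`, `fd_hessian`, `fd_jac` and `fd_hess` are illustrative helpers, not a library API). It also runs SciPy's `check_grad` to sanity-check the hand-derived gradient. Timings depend on the machine and on the differentiation tool, so the speed-up will not necessarily match the ~50x quoted.

```python
import timeit

import numpy as np
from scipy.optimize import check_grad, minimize


# Hypothetical toy objective (assumption; the post's `fun` is not shown):
# f(x; a) = (x0 - 1)^2 + (x1 - a)^2, minimised at x = (1, a).
def fun(x, a):
    return (x[0] - 1) ** 2 + (x[1] - a) ** 2


# Hand-derived gradient: df/dx0 = 2(x0 - 1), df/dx1 = 2(x1 - a).
def fun_der(x, a):
    return np.array([2 * (x[0] - 1), 2 * (x[1] - a)])


# Hand-derived Hessian: the constant matrix diag(2, 2).
def fun_hess(x, a):
    return np.diag([2.0, 2.0])


# Hypothetical stand-in for a numerical-differentiation tool:
# central-difference gradient, two function calls per coordinate.
def fd_gradient(f, x, *args, h=1e-6):
    x = np.asarray(x, dtype=float)
    g = np.empty_like(x)
    for i in range(x.size):
        e = np.zeros_like(x)
        e[i] = h
        g[i] = (f(x + e, *args) - f(x - e, *args)) / (2 * h)
    return g


# Central differences of the numerical gradient give the Hessian column by column.
def fd_hessian(f, x, *args, h=1e-4):
    x = np.asarray(x, dtype=float)
    n = x.size
    H = np.empty((n, n))
    for i in range(n):
        e = np.zeros(n)
        e[i] = h
        H[:, i] = (fd_gradient(f, x + e, *args) - fd_gradient(f, x - e, *args)) / (2 * h)
    return 0.5 * (H + H.T)  # symmetrise away round-off


def fd_jac(x, a):
    return fd_gradient(fun, x, a)


def fd_hess(x, a):
    return fd_hessian(fun, x, a)


def solve(jac, hess):
    # dogleg needs both a gradient and a positive-definite Hessian.
    return minimize(fun, x0, args=(a,), method="dogleg", jac=jac, hess=hess)


a = 2.5
x0 = np.array([2.0, 0.0])

# check_grad returns the 2-norm of (analytic - forward-difference) gradient.
grad_err = check_grad(fun, fun_der, x0, a)

res_exact = solve(fun_der, fun_hess)
res_fd = solve(fd_jac, fd_hess)

t_exact = timeit.timeit(lambda: solve(fun_der, fun_hess), number=100)
t_fd = timeit.timeit(lambda: solve(fd_jac, fd_hess), number=100)

print("check_grad error:", grad_err)
print("analytic x:", res_exact.x, "finite-difference x:", res_fd.x)
print(f"100 solves: analytic {t_exact:.4f}s, finite-difference {t_fd:.4f}s")
```

Both runs should land on the same minimiser. The difference is in cost: every gradient call in the finite-difference version evaluates `fun` twice per coordinate, and every Hessian call repeats that for each column. Analytic derivatives avoid that overhead entirely, which is why the gap widens as the objective gets more expensive or higher-dimensional.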
